By Gaurav Yadav, Second year, Law

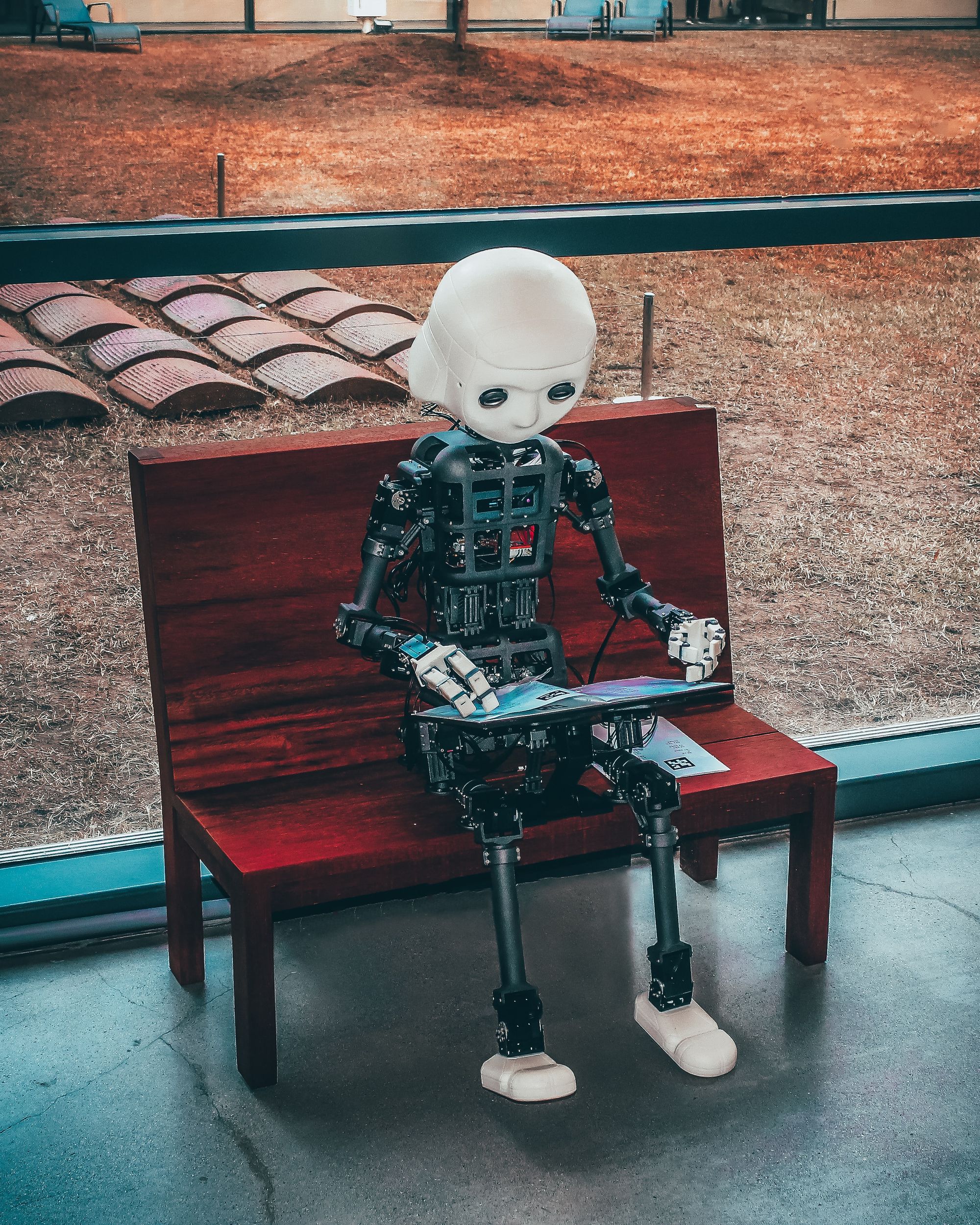

In 1955, four scientists coined the term ‘artificial intelligence’ (AI) and embarked on a summer research project aimed at developing machines capable of using language, forming abstractions and solving problems typically reserved for humans. Their ultimate goal was to create machines rivalling human intelligence. The past decade has witnessed a remarkable transformation in AI capabilities, but this rapid progress should prompt more caution than enthusiasm.

The foundation of AI lies in machine learning, a process by which machines learn from data without explicit programming. Using vast datasets and statistical methods, algorithms identify patterns and relationships in the data, later using these patterns to make predictions or decisions on previously unseen data. The current paradigm in machine learning involves developing artificial neural networks that mimic the human brain's structure.

AI systems can be divided into two categories: 'narrow' and 'general'. A 'narrow' AI system excels in specific tasks, such as image recognition or strategic games like chess or Go, whereas artificial general intelligence (AGI) refers to a system proficient across a wide range of tasks, comparable to a human being.

A growing number of people worry that the emergence of advanced AI could lead to an existential crisis. ‘Advanced AI’ broadly means AI systems capable of performing all cognitive tasks typically completed by humans—envision an AI system managing a company's operations as its CEO.

Daniel Eth, a former research scholar at the Future of Humanity Institute, University of Oxford, describes the potential outcome for advanced AI as one that could involve a single AGI surpassing human experts in most fields and disciplines. Another possibility entails an ecosystem of specialised AI systems, collectively capable of virtually all cognitive tasks. While researchers may disagree on the necessity of AGI or whether current AI models are approaching advanced capabilities, a general consensus exists that advanced or transformative AI systems are theoretically feasible.

Though certain aspects of this discussion might evoke a science fiction feel, recent AI breakthroughs seem to have blurred the line between fantasy and reality. Notable examples include large language models like GPT-4 and AlphaGo's landmark victory over Lee Sedol. These advancements underscore the potential for transformative AI systems in the future. AI systems can now recognise images, produce videos, excel at StarCraft, and produce text that is indistinguishable from human writing. The state of the art in AI is now a moving target, with AI capabilities advancing year after year.

Why should we be concerned about advanced AI?

If advanced AI is unaligned with human goals, it could pose significant risks for humanity. The 'alignment problem'—which is the problem of aligning the goals of an AI with human objectives—is difficult because of the black-box nature of neural networks. It is incredibly hard to know what is going on inside of an AI when it’s coming up with outputs. AI systems might develop their own goals that diverge from ours, which are challenging to detect and counteract.

For instance, a reinforcement learning model (another form of machine learning), controlling a boat in a racing game maximised its score by circling and collecting power-ups rather than finishing the race. Given its aim was to achieve the highest score possible, it will go about finding ways to do that even if it breaks our understanding of how to play the game.

It may seem far-fetched to argue that advanced AI systems could pose an existential risk to humanity based on this humorous example. However, if we entertain the idea that AI systems can develop goals misaligned with our intentions, it becomes easier to envision a scenario where an advanced AI system could lead to disastrous consequences for mankind.

Imagine a world where advanced AI systems gain prominence within our economic and political systems, taking control of or being granted authority over companies and institutions. As these systems accumulate power, they may eventually surpass human control, leaving us vulnerable to permanent disempowerment.

What do we do about this?

There is a growing field of professionals that are working in the field of AI safety, who are focused on solving the alignment problem and ensuring that advanced AI systems do not spiral out of control.

Presently, their efforts encompass various approaches, such as interpretability work, which aims to decipher the inner workings of otherwise opaque AI systems. Another approach involves ensuring that AI systems are truthful with us. A specific branch of this work, known as eliciting latent knowledge, explores the extraction of "knowledge" from AI systems, effectively compelling them to be honest.

At the same time, significant work is being carried out in the realm of AI governance. This includes efforts to minimise the risks associated with advanced AI systems by focusing on policy development and fostering institutional change. Organisations such as the Centre for Governance of AI are actively engaged in projects addressing various aspects of AI governance. By promoting responsible AI research and implementation, these initiatives seek to ensure that advanced AI systems are developed and deployed in ways that align with human values and societal interests.

The field of AI safety remains alarmingly underfunded and understaffed, despite the potential risks of advanced AI systems. Benjamin Hilton estimates that merely 400 people globally are actively working to reduce the likelihood of AI-related existential catastrophes. This figure is strikingly low compared to the vast number of individuals working to advance AI capabilities, which Hilton suggests is approximately 1,000 times greater.

If this has piqued your interest or concern, you might want to consider pursuing a career in AI safety. To explore further, you could read the advice provided by 80,000 Hours, a website that provides support to help students and graduates switch into careers that tackle the world’s most pressing problems, or deepen your understanding of the field of AI safety by enrolling in the AGI Safety Fundamentals Course.

Featured image: Generated using DALL-E by OpenAI