By Wing Wong, first year Chemistry

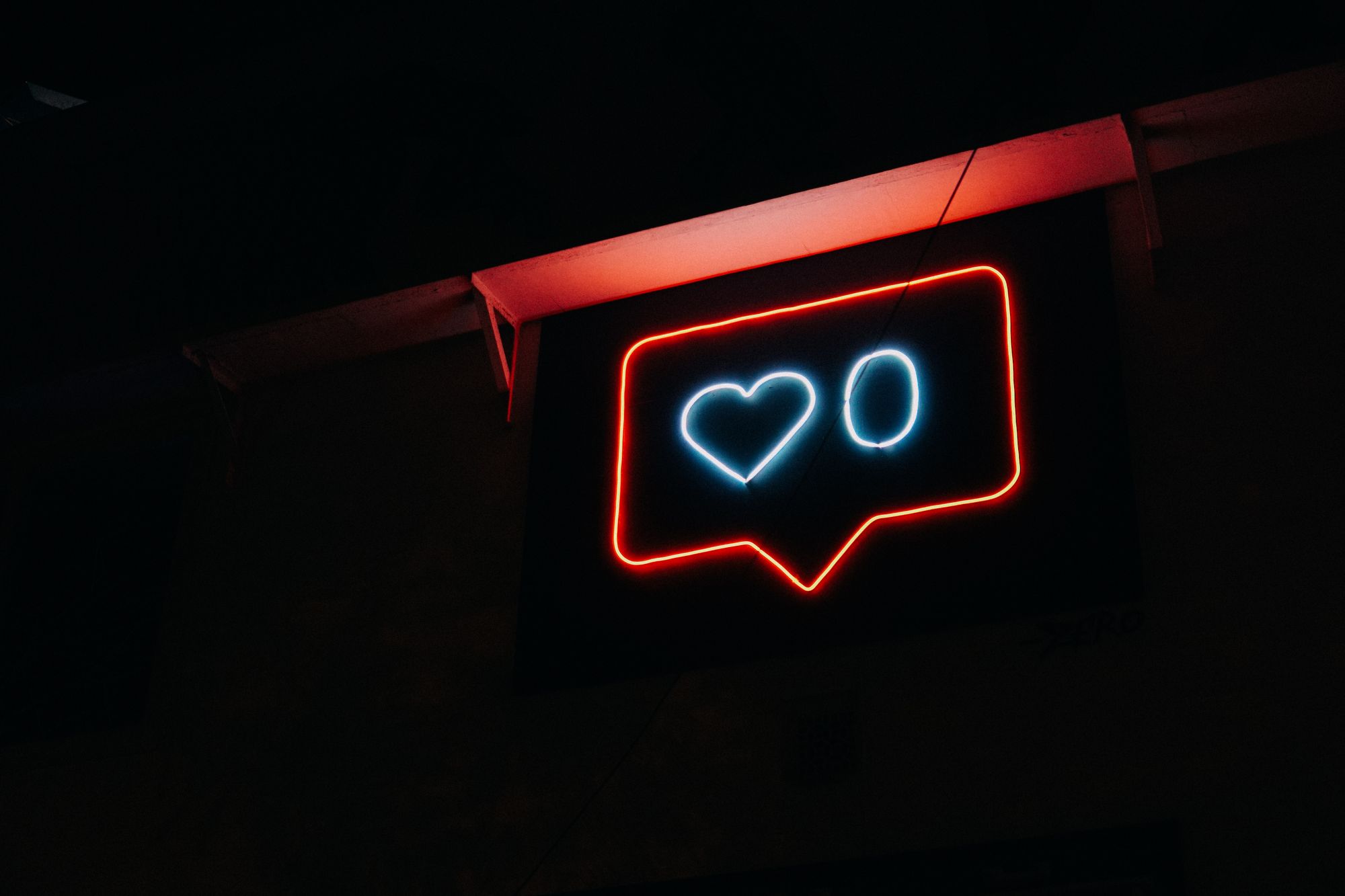

Facebook and Instagram may have to remove ‘Like’ buttons for children in the UK.

In an increasingly digital age, can the effects of social media on our lives go too far? Under a new code of practice proposed by the Information Commissioner's Office (ICO), under-18s on Facebook, Snapchat and other platforms may face restrictions on features like “likes” or “streaks” in a bid to set a global standard for children’s privacy online.

These “nudge techniques” are commonly used for profiling and targeted advertising; liking seemingly innocuous things can reveal sensitive information like religion and sexuality. As a result, we are shown content that is algorithmically tailored to who we appear to be. Another form of the technique is to make a lower privacy setting look more attractive than a higher one, in order to collect additional personal data.

Under the new 16-rule code, nudge techniques cannot be used to persuade children to provide unnecessary personal data, weaken their privacy protection or extend their use of the service. Other standards include limiting how data is collected, used and shared; defaulting to the “highest privacy” setting, with geolocation services disabled by default; and implementing “robust age-verification mechanisms”.

Amid the burgeoning distrust between the public and tech companies over personal privacy and data leaks, these measures aim to give children online access without their being tracked or their personal data being monetised. In a world where teenagers can be radicalised through internet propaganda, online security is a key priority. Earlier this month, Prince Harry commented that “social media is more addictive than drugs and alcohol, and it's more dangerous because it's normalised and there are no restrictions to it.”

The recent scrutiny of Facebook illustrates the unforeseen consequences that can arise from rapid technological advancement. Living through this transition, it is important to educate people about, and to monitor, the benefits, risks and costs of how technology is used.

“There has to be a balancing act: protecting people online while embracing the opportunities that digital innovation brings,” Information Commissioner Elizabeth Denham said in a statement. “In an age when children learn how to use a tablet before they can ride a bike, making sure they have the freedom to play, learn and explore in the digital world is of paramount importance.”

A consultation on the draft regulations has been launched and runs until the 31st of May; following approval, the code of practice is expected to be implemented by 2020. Failure to comply could incur a fine of up to 4% of a company’s global turnover, under the European Union’s General Data Protection Regulation (GDPR).

A study of teens aged 13-18 conducted by the UCLA Brain Mapping Centre revealed increased activity in the nucleus accumbens (a region of the brain’s reward circuitry that is thought to be particularly sensitive during adolescence) when they received a high number of social media likes. Teens were also strongly inclined to favour posts that had been endorsed by their peers, irrespective of the content, demonstrating the effect of herd mentality.

Though preliminary, there is growing evidence to suggest a link between heavy technology use and unhappiness. On average, 16- to 24-year-olds spend 3 hours on social media per day, equivalent to an entire decade over a lifetime. Whether it’s the fear of missing out or feeling like you’re the only person in the world not doing something, social media can have detrimental effects. We live in a perpetual state of interruption; the relentless onslaught of information can easily overwhelm and disorientate. Does the onus for change lie entirely in the hands of tech companies? It is incredibly difficult to attribute responsibility when the effects are so amorphous. Where does personal responsibility begin? Is this technology being designed for humans to use, or are we being manipulated by the technology itself instead?

There is a common narrative that technology is neutral, and that it is up to us to decide how we use it. However, algorithms are continuously influencing the behaviour of our society; companies are spending millions on developing techniques to exploit our psychological biases, embedding manipulative techniques in the infrastructure. An attention economy has evolved, whereby programmers are all competing to create the most popular time-waster. A world of falsity is cultivated, yet our thoughts, values and beliefs are shaped by these very platforms. Short-term, dopamine-driven feedback loops are key to creating habits that become bona fide addictions for many; we’re all addicted to some degree. There is a danger of creating isolated, alienated individuals who connect to one another through products and never directly. Still, the paradox is that we derive tremendous value from social media, and it can undoubtedly be a force for good. We do, however, need to eliminate the reliance on arguably unethical economic models that incentivise calculated behaviour manipulation.

The way we connect is changing; screen-to-screen interactions are becoming the norm, and social media is a necessary component of modern interaction. Nonetheless, there are complex challenges ahead regarding privacy and regulation, and an urgent need to develop technology that is compatible with human wellbeing. I am, though, profoundly optimistic about our potential to make progress and engage with technology on our own terms. We should not surrender power and algorithms to agendas that don’t have our best interests at heart.

Featured Image: Flickr / George Pagan III
